Build a simple transcription app with Bubble.io and OpenAI

Take any audio file and turn it into written text in a snap, with Bubble.io and OpenAI

Hello fellow Bubblers,

This tutorial continues the previous one on connecting Bubble to OpenAI. If you haven’t already read through that article, I recommend doing so first, since we will reference it later. You can access the previous tutorial using the link below:

How to Connect Bubble.io to OpenAI API

Getting Started

If you followed the previous tutorial, you should already have one call set up with OpenAI. We’ll add another call that is specific to the speech-to-text API. You can find more information about this API in OpenAI’s speech-to-text documentation.

In your Bubble app, navigate to the Plugins tab and open up the API Connector. Click on Add Another API, and label this new API OpenAI_Audio, or whichever name will be easy for you to identify. We’re creating a new API rather than a new call because this call requires a different Content-Type header. You could still do this in one API connection, but you would then need to define the headers separately on each call, even where calls share the same values.

Input the following in the Header section:

Key: Content-Type Value: multipart/form-data

Key: Authorization Value: Bearer [Your API Key]

Next, open your first call and label it Transcribe. Change the type of call to POST and paste the following URL:

https://api.openai.com/v1/audio/transcriptions

Next, set ‘Use as’ to Action and change the body type to Form-data. Then add two parameters, and label them as follows:

Key: model Value: whisper-1

Key: file Value: [Your audio file]

For the file parameter, you will need to upload a short audio file that you create. This will be used to initialize the call. You can record a short clip on your phone and save it to your computer. To upload it into this parameter, check the box labeled ‘send file’. The input label will then change to ‘upload’. Click on it, then upload your file.

Make sure to uncheck the private box as well. This exposes the file parameter in your workflows, so you can later set it to the file a user uploads for transcription. It should look as follows:

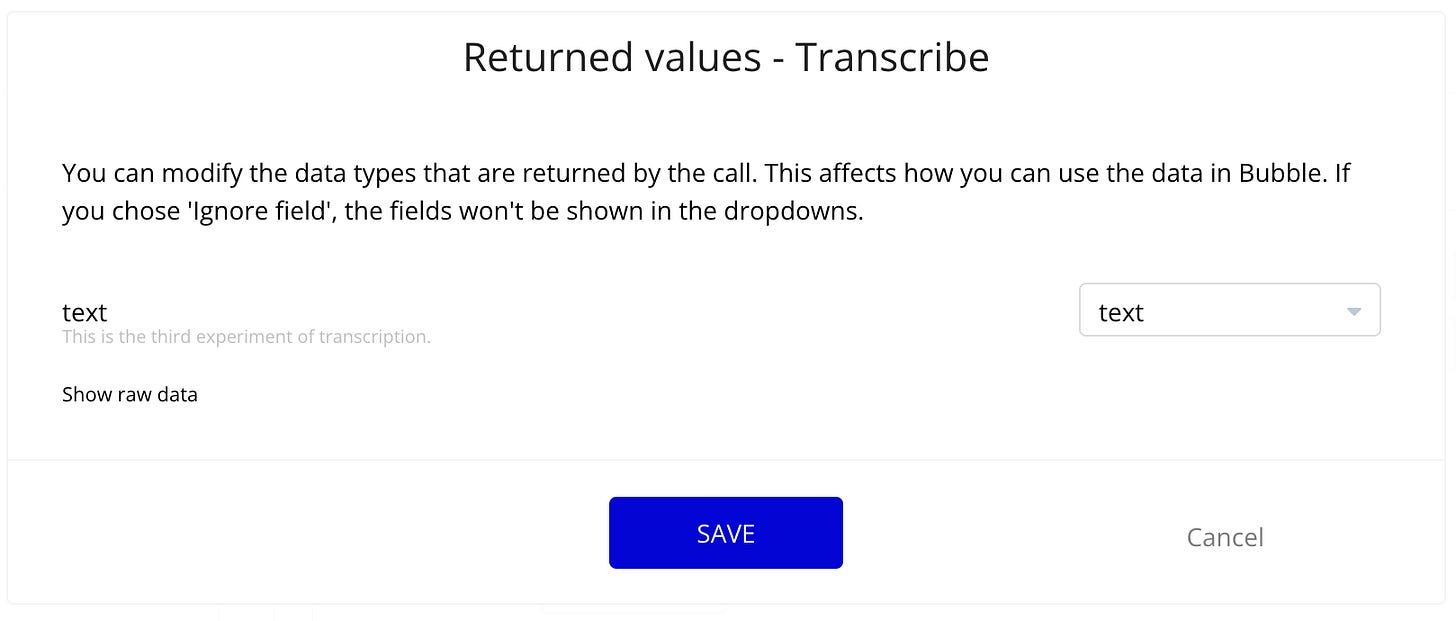
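If it helps to see what this configuration amounts to outside of Bubble, here is a rough Python sketch of the same request, using only the standard library. The function names `build_multipart` and `transcribe` are my own and purely illustrative, and I have not run this against the live API, so treat it as an approximation of what the API Connector sends rather than an exact copy. Note that the sketch appends a boundary to the Content-Type header, which a multipart body needs.

```python
import json
import mimetypes
import os
import urllib.request
import uuid

API_URL = "https://api.openai.com/v1/audio/transcriptions"


def build_multipart(fields, file_field, filename, file_bytes):
    """Encode text fields plus one file as a multipart/form-data body.

    Returns (body_bytes, content_type_header_value).
    """
    boundary = uuid.uuid4().hex
    # Guess the file's MIME type from its extension; fall back to a generic type.
    mime_type = mimetypes.guess_type(filename)[0] or "application/octet-stream"
    parts = []
    for name, value in fields.items():
        parts.append(
            (
                f"--{boundary}\r\n"
                f'Content-Disposition: form-data; name="{name}"\r\n\r\n'
                f"{value}\r\n"
            ).encode("utf-8")
        )
    parts.append(
        (
            f"--{boundary}\r\n"
            f'Content-Disposition: form-data; name="{file_field}"; '
            f'filename="{filename}"\r\n'
            f"Content-Type: {mime_type}\r\n\r\n"
        ).encode("utf-8")
        + file_bytes
        + b"\r\n"
    )
    parts.append(f"--{boundary}--\r\n".encode("utf-8"))
    return b"".join(parts), f"multipart/form-data; boundary={boundary}"


def transcribe(api_key, audio_path):
    """POST an audio file to the transcription endpoint and return the parsed JSON."""
    with open(audio_path, "rb") as audio_file:
        audio = audio_file.read()
    # Same two parameters as in the API Connector: model and file.
    body, content_type = build_multipart(
        {"model": "whisper-1"}, "file", os.path.basename(audio_path), audio
    )
    request = urllib.request.Request(
        API_URL,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": content_type,
        },
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

Calling `transcribe("[Your API Key]", "recording.m4a")` would play the same role as initializing the call in Bubble, where `recording.m4a` is a placeholder for whatever short clip you recorded.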

You can now initialize your call. The text field in the response will contain the transcription of the recording you uploaded.
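To make the shape of that response concrete, here is a small Python sketch that pulls the text field out of a JSON response. The sample body is made up for illustration (your transcription will differ), and `extract_transcript` is a hypothetical helper name, not something Bubble or OpenAI provides.

```python
import json


def extract_transcript(response_body: str) -> str:
    """Return the transcription from a JSON response that has a 'text' field."""
    data = json.loads(response_body)
    return data["text"]


# Made-up sample response, standing in for what the initialized call returns.
sample_response = '{"text": "Hello fellow Bubblers."}'
print(extract_transcript(sample_response))  # prints: Hello fellow Bubblers.
```

In Bubble you never write this yourself. Once the call is initialized, the text field simply becomes available as a value you can reference in your workflows.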

Building the frontend

We’re now going to design a page that allows a user to upload an audio file and send it to OpenAI for transcription. If you followed the previous OpenAI tutorial, you can duplicate the page we created there, and give it a name of your choosing. We’ll replace the multiline input with a file uploader, and label it ‘Upload your audio file’. Change the title to ‘Transcribe AI’, and change the button to ‘Transcribe’. You should have something that looks like this:

Lastly, change the label of the second button on the page to ‘New Audio’ instead of ‘New Question’.

Setting up the workflows

Next, we’ll update the workflows for the Transcribe button. Select the Transcribe button, then click Edit Workflow. Delete any existing actions, as we will be creating new ones.

For the first action, select your OpenAI call, in this case ‘OpenAI_Audio - Transcribe’. Then change the file input to dynamic data and set it to reference the value of the File Uploader that was placed on the page. It should look as follows:

The next action should be to reset the relevant inputs, and the final action should be to set the state of the page, as it was in the previous tutorial. The value of the state should be the text field of ‘Result of step 1’, which is the audio transcription result. This state is where the text is stored once the transcription is complete.
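Bubble workflows are built visually, but if you find it easier to reason about the order of the three actions in code, here is a loose Python model of them. Everything in it (`Page`, `run_transcribe_workflow`, and the `call_api` argument) is a hypothetical stand-in for the page, the workflow, and the API Connector call. It is only a sketch of the sequence, not how Bubble works internally.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Page:
    uploaded_file: Optional[str] = None  # stands in for the File Uploader's value
    transcript: Optional[str] = None     # stands in for the page's custom state


def run_transcribe_workflow(page: Page, call_api: Callable[[str], dict]) -> None:
    """Model the three actions attached to the Transcribe button."""
    # Step 1: send the uploaded file to the transcription call.
    result = call_api(page.uploaded_file)
    # Step 2: reset the relevant inputs.
    page.uploaded_file = None
    # Step 3: store the text field of step 1's result in the page state.
    page.transcript = result["text"]


# Usage with a fake API call, so nothing is actually sent anywhere.
page = Page(uploaded_file="recording.m4a")
run_transcribe_workflow(page, lambda file: {"text": f"Transcript of {file}"})
print(page.transcript)  # prints: Transcript of recording.m4a
```

The point to notice is that step 3 reads from the result of step 1, so the transcription is still available after the inputs have been reset in step 2.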

If you duplicated the page, you should not need to update any other workflows or states on your page. You should now have a working transcription app that allows users to upload audio files, which can then be turned into text.

I hope this article was helpful! If you found it useful, share it so other Bubblers can learn as well.

Happy Bubbling!

Shiku